Australia's COVID plan was designed before we knew how Delta would hit us. We need more flexibility

The problem with the plan to relax restrictions at 70 per cent and 80 per cent vaccination rates is that it’s based on modelling that’s now obsolete.

The great economist John Maynard Keynes, when accused of inconsistency on some policy, is credited with saying: “When the facts change, I change my mind. What do you do?”

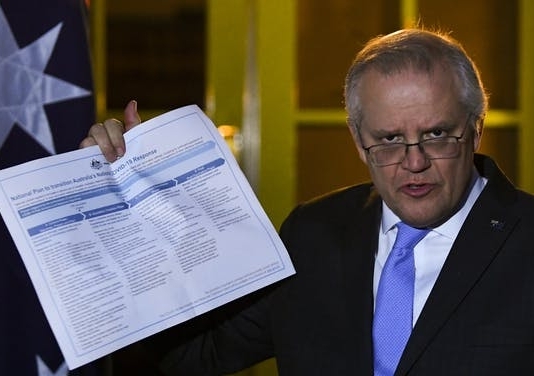

This point comes to mind in relation to the fractious debate over Australia’s “National Plan” to relax restrictions at 70 per cent and 80 per cent vaccination-rate thresholds.

This plan was agreed to by National Cabinet on August 6 — though apparently with a different interpretation for each government involved.

Underpinning it is modelling by the Doherty Institute, done in July this year. There has been plenty of argument about particular assumptions in the Doherty model. But the more fundamental problem is that it is effectively obsolete.

Ideally, models of any phenomenon are based on directly relevant data. In recent years the development of techniques for “big data” (large data sets, often derived from administrative records) has yielded a wide range of new insights.

In the case of COVID-19, the data needed for such an approach include evidence on how changing conditions affect the “Reff”, the effective reproduction rate of the virus.

The Reff is a number that indicates the average number of new cases generated by each existing case. It is influenced by vaccination rates, movement restrictions and the capacity of testing and tracing measures to locate and isolate those infected. To avoid an uncontrolled pandemic we need to keep the Reff below 1 most of the time.
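As a rough illustration of why the threshold of 1 matters, the sketch below treats each "generation" of infection as multiplying case numbers by Reff. This rests on deliberately simplified assumptions (a constant Reff, no delays between generations, no depletion of susceptible people) and is not how the Doherty or Burnet models work; the function name `project_cases` and the starting figure of 100 cases are hypothetical.

```python
def project_cases(initial_cases: float, reff: float, generations: int) -> list[float]:
    """Hypothetical toy projection: each generation of infection produces
    `reff` new cases per existing case, with Reff held constant throughout."""
    cases = [initial_cases]
    for _ in range(generations):
        # Multiply the latest generation's cases by Reff to get the next one.
        cases.append(cases[-1] * reff)
    return cases


# Compare Reff below, at and above 1, starting from an assumed 100 cases.
for reff in (0.8, 1.0, 1.3):
    projection = project_cases(100, reff, 5)
    print(reff, [round(c) for c in projection])
```

With Reff at 0.8 the 100 starting cases shrink to about 33 after five generations, while at 1.3 they grow to about 371. Small differences either side of 1 compound quickly, which is why the sign of Reff minus 1 matters more than any single day's case count.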

When the Doherty Institute undertook its modelling there was essentially no data for Australia relevant to the scenario policymakers are now contemplating.

There had been no sustained outbreak of the Delta variant, and only one extended lockdown to give evidence on the effectiveness of various measures. So the Doherty Institute had to use a theoretical model with parameters derived from a combination of overseas evidence (from countries with experience very different to that in Australia) and the best guesses its experts could make.

We now have a great deal of data on the Delta variant and the effectiveness — or ineffectiveness — of various restrictions, and the extent to which people change their behaviour in an outbreak.

In particular, we have learned that testing and contact tracing become markedly less effective once case numbers grow into the hundreds per day, as has happened in NSW and Victoria. The Doherty Institute has reportedly revised its modelling to take account of this, and presented revised advice to National Cabinet, but neither the model results nor the changes in modelling have been made publicly available.

By the time we reach the vaccination levels nominated in the National Plan, probably in October or later, we will have a good deal more data. Crucially, we will know whether the effective reproduction rate is above 1 (indicating exponential growth) or below 1 (indicating contraction of case numbers).

Rather than sticking to a predetermined schedule, we need to be willing to adjust our policy responses in the light of the latest data, and the most up-to-date models we have available.

This situation is somewhat analogous to climate change modelling.

The fundamental science underlying climate change has been known for more than 150 years. As long ago as 1896, the Swedish chemist Svante Arrhenius estimated that doubling the global concentration of carbon dioxide would raise the earth’s average temperature by 5℃.

When global warming became a concern in the 1980s, however, we had little more to go on than simple simulation models, with no certainty as to whether they were realistic representations.

That changed over the 1990s. Climate scientists refined general circulation models of the atmosphere and ocean, running on supercomputers and capable of incorporating vast quantities of data, and began modelling effects on soil and vegetation. Also, we are increasingly experiencing the predicted consequences of climate change, learning the hard way that the models were, if anything, conservative in their predictions.

With COVID-19 we don’t have time to develop the massive models that would make optimal use of the data becoming available every day. But we can use that data to improve our understanding of the way the virus spreads and the way our behaviour responds.

To some extent this is already happening with work done by the Burnet Institute, which is providing weekly updates to the NSW government. But this work is not being released publicly, and it appears the NSW government’s announced policies may be contrary to health advice based on the modelling.

The evolution of the pandemic is an interaction between the mutating characteristics of the virus and the way in which humans respond, interactively and collectively. For this reason, it is a mistake to let disciplinary boundaries decide whose advice should be heeded, and whose ignored. Epidemiologists, public health researchers, economists and other social scientists all have relevant expertise. In this emergency, it should be a case of “all hands to the wheel”.

In these rapidly changing times it makes no sense to fix a policy plan based on a months-old model.

We need to respond flexibly to new evidence as it comes to hand. We need to consider all kinds of data, including new evidence on the transmissibility of the virus, estimates of the likely uptake of vaccines, and observations on the way restrictions reduce movement around our cities.

What we don’t need is more speculation about the hypothetical dates and vaccination rates at which various restrictions will be lifted (or perhaps, looking at overseas experience, reimposed). Let’s focus on the facts as they are now.

John Quiggin, Professor, School of Economics, The University of Queensland; Richard Holden, Professor of Economics, UNSW, and Steven Hamilton, Visiting Fellow, Tax and Transfer Policy Institute, Crawford School of Public Policy, Australian National University

This article is republished from The Conversation under a Creative Commons license.