Forensic examiners pass the face-matching test

The first study to test the skills of FBI agents and other law enforcers trained in facial recognition has found they perform better than the average person or even computers on this difficult task.

The first study to test the skills of FBI agents and other law enforcers who have been trained in facial recognition has provided a reassuring result – they perform better than the average person or even computers on this difficult task.

The research also suggests that trained facial forensic examiners identify faces in a different way from the small number of people who are naturally very good at face matching – the so-called super-recognisers.

“Super-recognisers tested in previous studies appear to rely on automatic, holistic processes when they compare facial images, but forensic examiners use analytical methods,” says research leader UNSW psychologist Dr David White.

Because of increased use of CCTV, images captured on mobile phones and automatic face recognition technology, the comparison of facial images to identify suspects has become an important source of evidence.

“These identifications affect the course and outcome of criminal investigations and convictions. But despite calls for research on any human error in forensic proceedings, the performance of the experts carrying out the face matching had not previously been examined,” says Dr White.

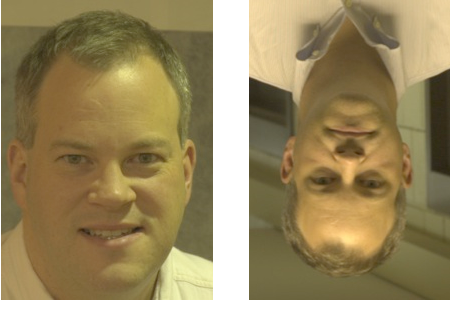

“The examiners’ superiority was greatest when they had a longer time to study the images, and they were also more accurate than others at matching faces when the faces were shown upside down. This is consistent with them tuning into the finer details in an image, rather than relying on the whole face.”

The study, which included colleagues at the National Institute of Standards and Technology and the University of Texas at Dallas in the US, is published in the Proceedings of the Royal Society B.

For the study, the researchers tested an international group of 27 facial forensic examiners with many years of experience who were attending a meeting of the Facial Identification Scientific Working Group. The group’s member agencies include the FBI, and police and customs and border protection services in the US, Australia and other countries.

The trained experts were given three tests where they had to decide if pairs of images were of the same person. Their performance was compared to that of a control group of non-experts who were attending the same meeting, as well as a group of untrained students.

Are they the same man? (spoiler: the answer is 'yes')

The pairs of images used were selected to be particularly challenging, as reflected in the fact that computer algorithms were 100% wrong on one of the tests. For some of the tests, participants were given either 2 seconds or 30 seconds to decide.

“Overall, our study is good news. It provides the first evidence that these professional examiners are experts at their work. They were consistently more accurate on all tasks than the controls and the students,” says Dr White.

“However, it is important to note that although the tests were challenging, the images were relatively good quality. Faces were captured on high-resolution cameras, in favourable lighting conditions and subjects were looking straight at the camera.

“This is often not the case when images are extracted from surveillance footage,” says Dr White.

Research collaborators included Alice O’Toole, Matthew Hill and Amanda Hahn from the University of Texas at Dallas and Jonathon Phillips from the National Institute of Standards and Technology in the US.